Somewhere right now a team is celebrating how fast their new AI tool is producing output.

They have no idea how much time they're about to spend cleaning it up.

The model is fast as hell. Confidently wrong, but fast.

And it looks like progress until someone has to check it, then someone has to fix the tone, then legal has to rewrite it, then a manager has to step in because the answer sounds right but isn't actually usable.

You didn't automate the work. You just moved it to three different people's calendars and called it progress.

And this is the part nobody talks about when they're selling you the tool.

AI benchmarks measure how a model performs in a lab. Clean inputs, good conditions, ideal scenarios.

Your business is nothing like that.

Your business runs on half-clean data, weird edge cases, people who are already stretched thin, approval chains that make no sense, and handoffs that break constantly.

That's the reality AI has to survive in. And most of the time, nobody tests for that before onboarding the tool.

MIT Technology Review published something on this recently that's worth reading. The core of it is:

Most benchmarks measure task performance in isolation. Real performance happens inside teams and workflows over time. That's why so many tools win the pitch and fall apart three weeks into actual use.

It's like hiring someone because they crushed the interview.

Then finding out they cannot function without someone holding their hand every step of the way.

So what should you actually be testing?

Forget the demo.

Run a workflow trial on one real process:

Sales follow-up

Customer support

Proposal drafting

Something that actually matters to your business. Not the idealised version of it, the real messy version.

Then watch four things.

Speed

Not how fast the model responds. How fast the full workflow gets done start to finish.

Because if the model spits out an answer in ten seconds and then someone spends twenty minutes verifying it, you didn't save anything. You just have faster mistakes.
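Here's that math as a rough sketch. The ten seconds and twenty minutes come from the example above. The manual baseline of 18 minutes is a made-up number for illustration, and the function name is hypothetical, so swap in whatever your own process actually takes.

```python
def end_to_end_minutes(model_seconds, review_minutes, rework_minutes=0.0):
    """Time for the whole step: model response plus the human work it triggers."""
    return model_seconds / 60 + review_minutes + rework_minutes


# Figures from the example above: a 10-second answer, 20 minutes of checking.
ai_total = end_to_end_minutes(model_seconds=10, review_minutes=20)

# Hypothetical baseline: assume a person did the same task by hand in 18 minutes.
manual_total = 18.0

minutes_saved = manual_total - ai_total  # negative means the AI version is slower

print(f"AI workflow: {ai_total:.1f} min, manual: {manual_total:.1f} min")
print(f"Minutes saved per task: {minutes_saved:.1f}")
```

With those assumed numbers the "fast" version comes out slower per task. The model's response time barely registers next to the review time.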

Errors

Not just the obvious ones. The subtle ones that sneak through:

Hallucinations

Wrong context

Bad judgment calls

Anything that forces a human to catch it downstream.

Adoption

Is your team actually reaching for it after the first week, or are they going back to the old way the second nobody's watching?

Real adoption means the tool earned its place in the company.

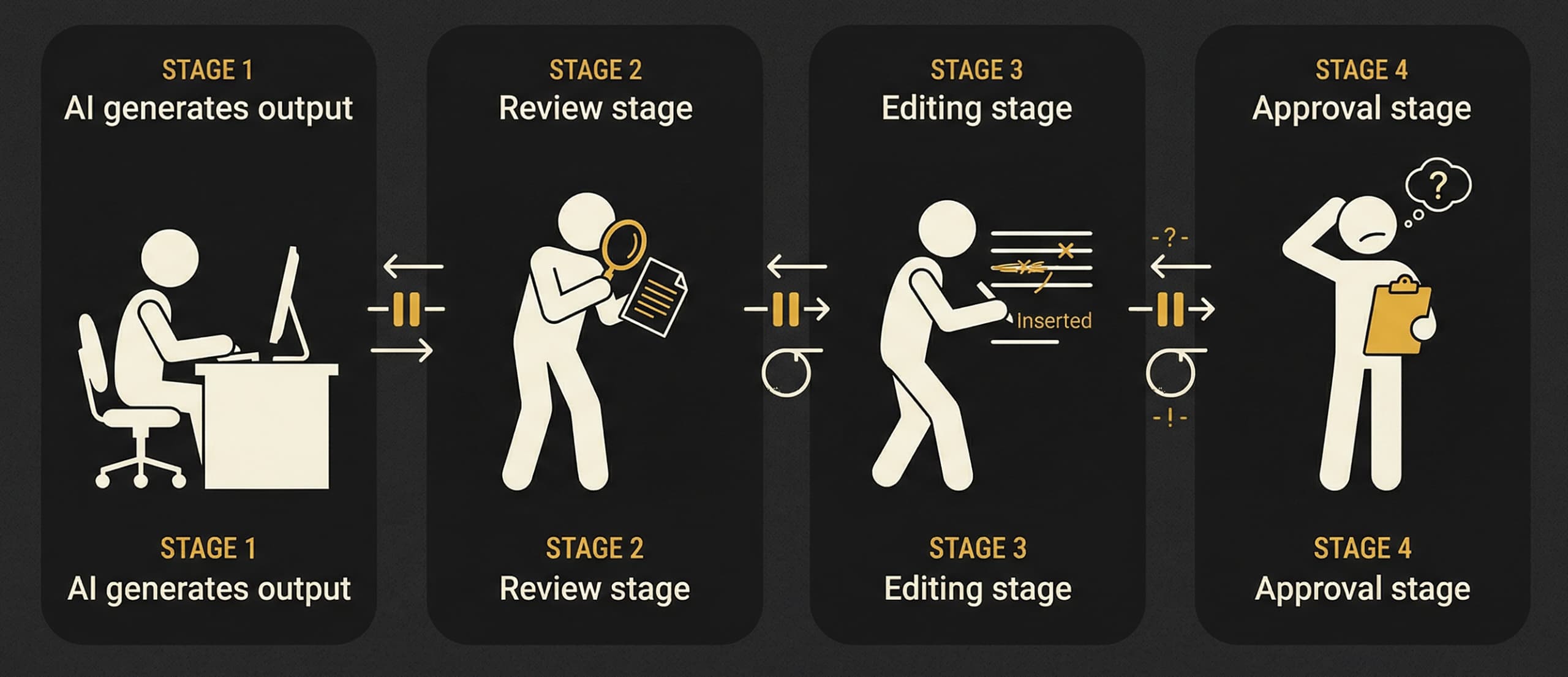

Downstream cleanup

This one gets ignored constantly.

What happens after the AI output leaves step one? Does someone need to reformat it? Rewrite it? Fix the tone? Step in because it sounds plausible but is actually wrong?

That cleanup is where most of your supposed ROI disappears.

You thought you were building leverage. You just turned it into a polished mess someone else has to deal with.

If I were running an AI rollout right now I'd do something boring and unglamorous.

Pick one narrow workflow. Run the old version and the AI version side by side for two weeks.

Compare the actual numbers. Completion time. Error corrections. How often people use it. How much cleanup it creates after. Then decide from there.
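If it helps to see it, the comparison can be as simple as the sketch below. Everything in it is an assumption for illustration: the `TrialArm` fields, the function names, and every number are invented, not results from a real trial. The point is only that cleanup time gets counted alongside the time for the work itself.

```python
from dataclasses import dataclass


@dataclass
class TrialArm:
    """One side of the side-by-side trial (hypothetical structure)."""
    name: str
    tasks_completed: int
    work_minutes: float       # time until the output leaves step one
    cleanup_minutes: float    # reformatting, rewriting, tone fixes afterwards
    error_corrections: int    # errors a human had to catch and fix

    def minutes_per_task(self) -> float:
        # Count the cleanup too, otherwise the number flatters the tool.
        return (self.work_minutes + self.cleanup_minutes) / self.tasks_completed

    def corrections_per_task(self) -> float:
        return self.error_corrections / self.tasks_completed


def adoption_rate(times_used: int, opportunities: int) -> float:
    """Share of eligible tasks where the team actually reached for the tool."""
    return times_used / opportunities


# Invented two-week numbers, purely to show the shape of the comparison.
old = TrialArm("old way", tasks_completed=40, work_minutes=720,
               cleanup_minutes=40, error_corrections=6)
ai = TrialArm("AI version", tasks_completed=40, work_minutes=500,
              cleanup_minutes=300, error_corrections=14)

for arm in (old, ai):
    print(f"{arm.name}: {arm.minutes_per_task():.1f} min/task, "
          f"{arm.corrections_per_task():.2f} corrections/task")

print(f"Adoption: {adoption_rate(times_used=22, opportunities=40):.0%}")
```

In this made-up case the AI version finishes step one faster but loses once cleanup is counted. That's the pattern to look for in your own numbers.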

Not from a leaderboard, or from a founder telling you their model beats GPT on a benchmark you will never use in your actual business.

From the workflow. Because that's the only thing that tells the truth.

And if you're building an AI product, stop leading with intelligence as the value proposition.

Nobody cares how smart your model is. They care whether their work gets easier, faster, and less error-prone. That's the product. Everything else is just a pitch.

Buy AI because the work gets better. Not because the score looked good.

Navin